The last feature of the class: light refraction. I use Snell’s Law to calculate the refracted light vectors and Beer’s Law to calculate light absorption through media. I’ve got my render farm running at full tilt now, so I was able to get some really nice renders.
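Here is a minimal sketch of those two formulas. It assumes unit-length direction and normal vectors, with the normal facing the incoming ray. The function names are hypothetical, and this is not necessarily how my tracer structures them. `refract` is the vector form of Snell’s Law, t = ηd + (η cos θi − cos θt)n with η = ηi/ηt. `beer_transmittance` applies Beer’s Law per color channel, using an absorption coefficient σa in inverse scene units:

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def refract(d: Vec3, n: Vec3, eta_i: float, eta_t: float) -> Optional[Vec3]:
    """Refract unit direction d at a surface with unit normal n (Snell's Law).

    n must point back toward the incident side, i.e. dot(d, n) < 0.
    eta_i / eta_t are the refractive indices on the incident / transmitted side.
    Returns None on total internal reflection.
    """
    eta = eta_i / eta_t
    cos_i = -dot(d, n)
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # total internal reflection: no transmitted ray
    cos_t = math.sqrt(1.0 - sin2_t)
    k = eta * cos_i - cos_t
    return (eta * d[0] + k * n[0], eta * d[1] + k * n[1], eta * d[2] + k * n[2])


def beer_transmittance(sigma_a: Vec3, distance: float) -> Vec3:
    """Fraction of light per RGB channel that survives `distance` in a medium."""
    return (math.exp(-sigma_a[0] * distance),
            math.exp(-sigma_a[1] * distance),
            math.exp(-sigma_a[2] * distance))
```

Returning `None` on total internal reflection lets the caller fall back to a mirror bounce. In a full tracer, the choice between reflection and refraction would normally be weighted by a Fresnel term, which this sketch leaves out.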

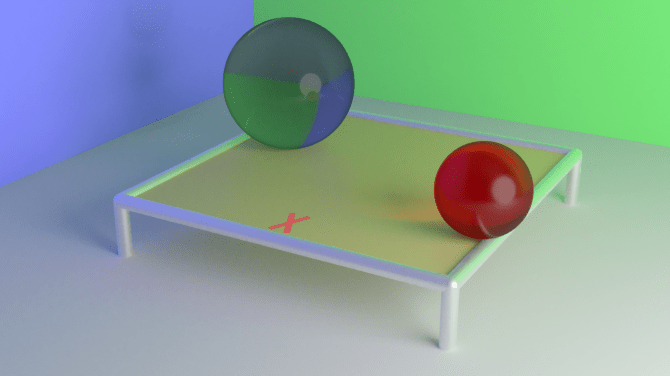

This first render was the default test scene. Not very exciting, but it looks nice! The caustics from the red ball converged nicely and add that little touch of realism. Statistics:

- Resolution: 1920×1080

- 1024 passes

- Rendered on the render farm

- 45 computers in cluster

- Intel i7-7700s @ 4.2 GHz

- 360 logical cores total

- Render time: ~2 minutes
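The convergence of those caustics follows the usual Monte Carlo rate. Assume each pass contributes one independent sample per pixel with standard deviation σ. That is an assumption about how a “pass” is defined here. The per-pixel noise after N passes is then:

$$
\sigma_N = \frac{\sigma}{\sqrt{N}}, \qquad \sigma_{1024} = \frac{\sigma}{\sqrt{1024}} = \frac{\sigma}{32}
$$

Halving the noise therefore costs four times as many passes. In a unidirectional path tracer, caustic paths tend to have a large σ, which is why they are usually the last part of the image to clean up.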

Now, this image is a different version of the well-known Cornell Box. I saw a render of a similar scene online and really liked it, so I recreated it. The final product came out really nice. I wish I had made the spheres a little less reflective, but it’s still a great image. Notably, the sphere acted as a sort of lens, focusing the light onto the floor. Stats:

- Resolution: 1920×1080

- 8192 passes

- Rendered on the render farm

- 45 computers in cluster

- Intel i7-7700s @ 4.2 GHz

- 360 logical cores total

- Render time: ~15 minutes
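The sphere’s lens-like focusing in the Cornell Box render can be estimated with the paraxial ball-lens formula. The post does not state the sphere’s index of refraction or size, so assume a glass-like n = 1.5 and a diameter D. The effective focal length f is measured from the sphere’s center, and the back focal distance (BFL) is measured from its far surface:

$$
f = \frac{nD}{4(n-1)}, \qquad \mathrm{BFL} = f - \frac{D}{2} = \frac{D(2-n)}{4(n-1)}
$$

With n = 1.5 this gives f = 0.75D and BFL = 0.25D, so light passing through the sphere should converge about a quarter of a diameter beyond it. A real sphere has strong spherical aberration, so the focus shows up as a bright, soft caustic rather than a sharp point.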

For future work, I’d love to change how the load balancer for my render farm works. It doesn’t handle complex scenes very well: a few computers tend to get swamped with the most complicated parts of the scene. I might try something closer to how Maya handles render farms, where each client in the farm renders a whole frame. Instead of each client rendering a chunk of the image, each one would render a given number of passes over the whole screen. The final result would be an additive blend of all outputs, divided by the total number of passes. Since each computer would have an identical load, they’d probably finish around the same time (assuming similar hardware). However, every client would send back a full-resolution buffer instead of a chunk, which would increase the amount of data sent over the network (not necessarily a problem I need to worry about).
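Here is a minimal sketch of that pass-splitting scheme. The function names are hypothetical. Images are flattened to one float per pixel for brevity, and each client is assumed to return the per-pixel *sum* of its samples, one sample per pixel per pass:

```python
from typing import List, Sequence


def split_passes(total_passes: int, num_clients: int) -> List[int]:
    """Divide a pass budget across clients as evenly as possible.

    Every client covers the whole frame, so equal pass counts mean roughly
    equal work no matter where the expensive pixels are.
    """
    base, extra = divmod(total_passes, num_clients)
    # The first `extra` clients take one additional pass each.
    return [base + (1 if i < extra else 0) for i in range(num_clients)]


def merge_accumulations(sums: Sequence[Sequence[float]],
                        passes: Sequence[int]) -> List[float]:
    """Additively blend per-client accumulation buffers into the final image.

    Adding the per-pixel sums and dividing by the total pass count gives the
    same per-pixel mean as one machine rendering every pass.
    """
    total = sum(passes)
    num_pixels = len(sums[0])
    return [sum(buf[p] for buf in sums) / total for p in range(num_pixels)]
```

For the Cornell Box settings, `split_passes(8192, 45)` gives two clients 183 passes and the rest 182. As a rough cost, assume RGB float32 buffers. One 1920×1080 frame is then 1920 × 1080 × 3 × 4 = 24,883,200 bytes (about 24.9 MB) per client, or roughly 1.12 GB per frame across 45 clients.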

I’m also toying with having the clients pull and render “patches” of the image (small 64×64 chunks, perhaps). This would allow clients to join and leave without major problems. Theoretically, I could then hook this up to AWS, letting EC2 instances be spun up and connected automatically. This would be really cool, as all I would have to do is start a render and everything else would be handled automatically.
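Here is a minimal sketch of the patch idea, assuming a pull-based queue where every leased tile carries a deadline. The names (`make_tiles`, `TileQueue`, `lease_seconds`) are hypothetical. Networking and EC2 provisioning are left out entirely. This only shows the bookkeeping that lets clients come and go:

```python
import collections
from typing import Deque, Dict, Iterable, List, Optional, Set, Tuple

# (x0, y0, x1, y1) in pixels, half-open: x0 <= x < x1, y0 <= y < y1.
Tile = Tuple[int, int, int, int]


def make_tiles(width: int, height: int, size: int = 64) -> List[Tile]:
    """Cut the frame into size x size patches; edge tiles are clipped."""
    return [(x, y, min(x + size, width), min(y + size, height))
            for y in range(0, height, size)
            for x in range(0, width, size)]


class TileQueue:
    """Pull-based work queue for a render farm whose clients come and go.

    Idle clients call lease(). A leased tile that is not completed before its
    deadline goes back on the queue, so a client that leaves mid-tile loses
    no work. Time is passed in explicitly (e.g. time.monotonic()) so the
    logic is easy to test.
    """

    def __init__(self, tiles: Iterable[Tile], lease_seconds: float = 60.0):
        self.pending: Deque[Tile] = collections.deque(tiles)
        self.leased: Dict[Tile, float] = {}  # tile -> lease deadline
        self.done: Set[Tile] = set()
        self.lease_seconds = lease_seconds

    def _reclaim_expired(self, now: float) -> None:
        expired = [t for t, deadline in self.leased.items() if deadline <= now]
        for tile in expired:
            del self.leased[tile]
            self.pending.append(tile)

    def lease(self, now: float) -> Optional[Tile]:
        """Hand the next tile to an idle client, or None if nothing is pending."""
        self._reclaim_expired(now)
        if not self.pending:
            return None
        tile = self.pending.popleft()
        self.leased[tile] = now + self.lease_seconds
        return tile

    def complete(self, tile: Tile) -> bool:
        """Record a finished tile; returns False for a duplicate result."""
        if tile in self.done:
            return False
        # Accept late results too: drop any re-queued copy of the tile.
        self.leased.pop(tile, None)
        if tile in self.pending:
            self.pending.remove(tile)
        self.done.add(tile)
        return True

    def finished(self) -> bool:
        return not self.pending and not self.leased
```

A 1920×1080 frame cut this way gives 30 × 17 = 510 tiles, with the bottom row only 56 pixels tall. The lease timeout is the design choice that matters here. If it is too short, slow clients get their tiles duplicated. If it is too long, a vanished instance stalls the end of the render.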

As far as rendering features go, I have a laundry list. I want to try to upgrade my path tracer to use either Metropolis Light Transport or Bidirectional Path Tracing. Other features I’m interested in are anisotropic specular highlights, subsurface scattering, and maybe even dispersion, where the light spectrum spreads out because each wavelength refracts differently through a medium. My goal would be to render an animation, something short.